There has been a lot of talk in the industry over the last couple of years about SMART-NICs. In this blog, we share our perspective on this new class of network accelerators and the role they play in the future of compute platforms. SMART-NICs are expected to play a key role in compute platforms for large Data Centers, Edge and Telco 5G environments.

There is a general trend in the industry towards building compute platforms that consist of general-purpose CPUs coupled with dedicated accelerators (SoCs and ASICs). The industry also refers to these as Domain Specific Architectures, where a host processor is used to set things up and accelerators do the compute processing for the specific problem domain. A number of factors are driving the adoption of this hybrid computing architecture:

- The increasing number of cores in CPUs has improved CPU performance, but memory and I/O bandwidth improvements have not kept pace. Combining CPUs with accelerators moves some of the processing onto accelerator cards, so data does not have to be transferred back and forth between memory, I/O and the CPU.

- As network speeds and disk performance increase, some of the associated processing (network services and data processing / analytics) is moving closer to the network in the form of FPGAs to avoid sending unnecessary data to host memory.

- Telcos are moving towards virtualizing the network edge with the evolution to 5G. Hardware acceleration will play a key role in the 5G architecture for network services offload, 5G network slicing and real-time data processing.

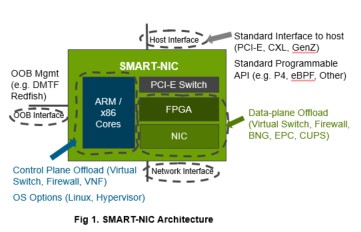

SMART-NICs are a class of accelerators that combine a standard NIC (Network Interface Controller) with an FPGA and CPU cores (ARM or x86 cores), as shown in Fig 1. They are expected to play a key role in future system architecture because most infrastructure services and applications are, or will be, network connected. Examples of infrastructure services include network services (virtual switches, firewalls, load balancers, Telco virtual network functions, SD-WAN), storage services (SDS software for block, file and object storage), analytics, and machine learning. These infrastructure services have moved to software-defined architectures with SDN (Software Defined Networking), SDS (Software Defined Storage) and distributed data analytics (Hadoop, Spark).

As network speeds increase, the associated network processing needs to move from the host CPU to network adapters in order to keep up with data rates and reduce the amount of data sent over the I/O bus to host memory for processing. Hypervisor-resident virtual switches provide a number of functions, including data movement, virtual switching overlay, encryption, deep packet inspection, load balancing and firewalling. It is hard to scale these features in software to future network speeds of 50/100/200G. The FPGA and NIC on the SMART-NIC (Fig 1) enable this data plane offload to scale to higher throughput, lower latency and higher packets per second (pps) performance. Other higher-level network services and Telco virtual network functions (VNFs) are also starting to leverage the FPGA and NIC ASIC to offload data plane processing and new features like network slicing for 5G.
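To make the scaling problem concrete, the short calculation below estimates the worst-case packet rate at the link speeds mentioned above. It is a back-of-the-envelope sketch, not a benchmark: it assumes minimum-size 64-byte Ethernet frames plus the standard 20 bytes of per-frame wire overhead (preamble, start delimiter and inter-frame gap), and the function names are ours.

```python
# Worst-case Ethernet packet rate and per-packet time budget.
# Assumption: minimum-size frames, which is the hardest case for a
# software data plane because per-packet costs dominate.

MIN_FRAME_BYTES = 64       # minimum Ethernet frame, including FCS
WIRE_OVERHEAD_BYTES = 20   # 7 preamble + 1 start delimiter + 12 inter-frame gap


def max_packets_per_second(link_gbps, frame_bytes=MIN_FRAME_BYTES):
    """Upper bound on frames per second that fit on the wire."""
    bits_per_frame = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_per_frame


def per_packet_budget_ns(link_gbps, frame_bytes=MIN_FRAME_BYTES):
    """Time available to process one frame at line rate, in nanoseconds."""
    return 1e9 / max_packets_per_second(link_gbps, frame_bytes)


if __name__ == "__main__":
    for speed in (50, 100, 200):
        mpps = max_packets_per_second(speed) / 1e6
        budget = per_packet_budget_ns(speed)
        print(f"{speed}G: {mpps:.1f} Mpps, {budget:.2f} ns per packet")
```

Under these assumptions a 100G link can carry roughly 148.8 million packets per second, which leaves about 6.7 ns to process each packet. That budget is on the order of a single cache miss, which is why per-packet work in a hypervisor-resident switch becomes difficult to sustain on general-purpose cores.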

Some of the data plane functions also benefit from moving the associated control plane closer to the data plane, which has led to embedded CPU cores on SMART-NICs. What are the benefits of doing this?

- Support of any OS running on the host CPU and enablement of bare-metal containers.

- Stronger security: software on the SMART-NIC can be delivered as embedded signed images, and it remains isolated from any security attack on the OS or applications running on the host CPU.

- Software running on the SMART-NIC can isolate a server from the rest of the network if a security threat is detected.

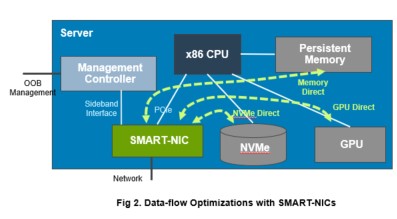

Offloading network functions to the SMART-NIC creates an opportunity to further optimize the data flows in the overall compute system, as shown in Fig 2. Software running on the SMART-NIC can enable direct data transfers to server storage and GPUs without using host memory as a staging area, thus improving performance, reducing latency and freeing up host CPU cycles.
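The effect on host memory can be illustrated with a deliberately simplified model. The sketch below only counts how many times each payload byte is written to or read from host memory on each path; the class, the path names and the counts are illustrative assumptions, and the model ignores descriptors, metadata and any extra CPU copies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferPath:
    """Simplified model of a NIC-to-device data path (hypothetical)."""
    name: str
    host_memory_writes: int  # times each payload byte is written to host memory
    host_memory_reads: int   # times each payload byte is read from host memory

    def host_memory_traffic(self, payload_bytes):
        """Bytes of host memory bandwidth consumed to move the payload."""
        return payload_bytes * (self.host_memory_writes + self.host_memory_reads)


# Staged: the NIC writes the payload into host memory, then the GPU or
# storage device reads it back out.
STAGED = TransferPath("staged via host memory", 1, 1)

# Direct: the NIC transfers to the peer device without touching host memory.
DIRECT = TransferPath("direct to device", 0, 0)

if __name__ == "__main__":
    payload = 1_000_000_000  # 1 GB of received data
    for path in (STAGED, DIRECT):
        print(f"{path.name}: {path.host_memory_traffic(payload):,} bytes of host memory traffic")
```

Even in this minimal model the staged path moves every byte through host memory twice, while the direct path leaves host memory bandwidth free for the applications running on the host.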

A number of industry-level standardization efforts are needed to develop open APIs so that a SMART-NIC from any vendor can be used to accelerate any workload. These include:

- Standardization of the data plane interface that applications use to offload data plane processing.

- Standardization of interfaces for software life cycle management of SMART-NICs.

- Standardization of hardware management and monitoring of SMART-NICs via DMTF Redfish interfaces.
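As an illustration of the last item, the sketch below shows how a management tool might enumerate network adapters through Redfish. It relies on two parts of the DMTF Redfish data model: the `NetworkAdapters` collection under a chassis resource, and the `Members` array of `@odata.id` links that every Redfish collection returns. The host name, the chassis ID `1`, the adapter ID `SmartNIC1` and the sample payload are hypothetical, and a real client would also need authenticated HTTPS requests.

```python
import json
from urllib.parse import urljoin


def network_adapters_path(chassis_id):
    """Redfish URI of the network adapter collection for one chassis."""
    return f"/redfish/v1/Chassis/{chassis_id}/NetworkAdapters"


def member_uris(base_url, collection):
    """Resolve the '@odata.id' link of every member of a Redfish collection."""
    return [urljoin(base_url, m["@odata.id"]) for m in collection.get("Members", [])]


# Hypothetical response body, shaped like a Redfish collection resource.
SAMPLE_COLLECTION = json.loads("""
{
  "@odata.id": "/redfish/v1/Chassis/1/NetworkAdapters",
  "Name": "Network Adapter Collection",
  "Members@odata.count": 1,
  "Members": [
    {"@odata.id": "/redfish/v1/Chassis/1/NetworkAdapters/SmartNIC1"}
  ]
}
""")

if __name__ == "__main__":
    base = "https://bmc.example.com"  # hypothetical management controller
    print("Collection:", urljoin(base, network_adapters_path("1")))
    for uri in member_uris(base, SAMPLE_COLLECTION):
        print("Adapter:", uri)
```

Because every collection exposes its members the same way, the same helper works for SMART-NICs from different vendors, which is the kind of interoperability the standardization effort aims for.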

SMART-NICs will play a key role in compute platforms for both Data Center and Edge. In data centers, SMART-NICs enable workloads to scale to higher network speeds. They also free up host CPU cores and reduce memory consumption and I/O bus utilization by moving CPU-intensive and data-intensive computing to hardware. In Edge deployments, SMART-NICs enable a move towards single-socket servers instead of the current dual-socket platforms, as well as new features like network slicing for 5G, Telco VNF acceleration, content distribution, image processing and machine learning inferencing.

Because of the importance of this hybrid architecture for next-generation workloads, CPU vendors, notably Intel, have also shifted from a processor point of view to a system point of view, with investments in FPGAs, SMART-NICs, GPUs and co-processors. This change will enable the next generation of highly optimized Edge and 5G deployments to be based on x86 compute platforms and SMART-NICs coupled with high-speed persistent memory and storage.

Dell Technologies is leading innovation in future hybrid system architectures with FPGAs, SMART-NICs, GPUs and other SoC-based multi-core accelerators, while working on the standardization of APIs and frameworks. Dell Technologies is also partnering with Telecommunication Service Providers to bring leading technology to our journey to 5G.