Data Domain: OS Upgrade Guide for Highly Available (HA) systems

Summary: This article provides a process overview for Data Domain Operating System (DDOS) upgrades on Data Domain Highly Available (HA) pairs.

Instructions

Table of Contents:

- HA system planned maintenance

- Before starting

- Rolling upgrade using the GUI

- Rolling upgrade using the command line

- Local upgrade

HA system planned maintenance

To reduce planned maintenance downtime, the HA architecture includes a rolling upgrade function. A rolling upgrade upgrades the standby node first and then uses an HA failover to move services from the active node to the standby node. Finally, the previously active node is upgraded and rejoins the HA cluster as the standby node. A single command performs all of these steps.

An alternative, manual approach is the "local upgrade": manually upgrade the standby node first, then manually upgrade the active node, after which the standby node rejoins the HA cluster. Local upgrades can be used either for regular upgrades or to fix issues.

Some system upgrade operations require data conversion or index rebuilds on the active node. Do not start these operations until both nodes are upgraded to the same level and HA state is fully restored.

Data Domain File System (DDFS) availability during a rolling upgrade:

- The standby node is upgraded first, then reboots to the new version. This takes roughly 20-30 minutes, depending on various factors. The DDFS stays up and operates on the active node during this period without any performance degradation.

- After the new DDOS is applied, the system fails over to the upgraded standby node, making it the new active node. This takes roughly 10 mins (again, depending on various factors).

- One significant factor is disk enclosure (DAE) firmware upgrades. This can add around 20 mins more downtime depending on how many DAEs are configured. Refer to this article to determine if a DAE firmware upgrade is required. Starting with DDOS 7.5 there is an enhancement to enable online DAE firmware upgrades, eliminating this concern.

- Engage your account team to discuss factors that could impact upgrade times. Depending on the client OS, applications, and protocols used, client workloads may need to be manually resumed right after failover. For example, if a system is backing up DD Boost clients and the failover takes more than 10 mins, the clients time out and the user must manually resume workloads. However, there are usually tunable settings available on clients to set timeout values and retry times.

- The DDFS is down during the failover period. Monitor the output of the "filesys status" command on the upgraded node to confirm whether the DDFS service has resumed.

- After the failover, the previously active node starts upgrading. After the upgrade is complete, the node reboots to the new version and then rejoins the HA cluster as the standby node. The DDFS service is not impacted during this process because it was already resumed in the previous step.
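
As a rough illustration of the timing discussion above, the following Python sketch adds up the approximate failover figures quoted in this article (about 10 minutes for the failover, about 20 more when an offline DAE firmware upgrade is required) and compares the result with a client timeout. The function names and the default values are assumptions made for this example; actual times depend on the factors listed above.

```python
def estimated_failover_downtime_minutes(
    dae_firmware_upgrade_needed: bool,
    failover_minutes: int = 10,       # rough figure quoted in this article
    dae_firmware_minutes: int = 20,   # rough figure quoted in this article
) -> int:
    """Return a rough estimate of DDFS downtime during the failover step."""
    downtime = failover_minutes
    if dae_firmware_upgrade_needed:
        downtime += dae_firmware_minutes
    return downtime


def clients_may_time_out(downtime_minutes: int, client_timeout_minutes: int) -> bool:
    """True when the estimated downtime is longer than the client timeout."""
    return downtime_minutes > client_timeout_minutes


if __name__ == "__main__":
    downtime = estimated_failover_downtime_minutes(dae_firmware_upgrade_needed=True)
    print(downtime)                                                   # 30
    print(clients_may_time_out(downtime, client_timeout_minutes=10))  # True
```

If the estimate exceeds the client timeout, plan to resume client workloads manually or adjust the client-side timeout and retry settings before the upgrade.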

Before starting:

- Review this article: DDHA Upgrade Pre-Check

- Stop all cleaning, data movement, replication, and backup activity.

- Perform a manual failover twice (optional). The expected file system downtime for each failover is around 10 minutes.

- Confirm that HA status is "highly available." Rolling upgrades cannot be run if HA is degraded.

- Upload the upgrade RPM file:

- Using SCP

- Using CIFS

- For a rolling upgrade, upload the RPM to the active node only.

- For a local upgrade, upload the RPM to both nodes.
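
The HA status precondition above can be checked from captured "ha status" output. The Python sketch below is only an illustration: it assumes the output has already been saved as text (how it is collected is out of scope), the sample text mirrors the layout shown later in this article, and the function names are hypothetical.

```python
import re
from typing import Optional

# Sample text modeled on the "ha status" output shown in this article.
SAMPLE_HA_STATUS = """\
HA System name:    HA-system
HA System status:  highly available
Node Name   Node id   Role      HA State
---------   -------   -------   --------
Node0       0         active    online
Node1       1         standby   online
---------   -------   -------   --------
"""


def ha_system_status(ha_status_output: str) -> Optional[str]:
    """Extract the HA system status value, or None if the line is missing."""
    match = re.search(r"HA System status:[ \t]*(.+)", ha_status_output, re.IGNORECASE)
    if match is None:
        return None
    return match.group(1).strip().lower()


def rolling_upgrade_allowed(ha_status_output: str) -> bool:
    """A rolling upgrade requires the status to be 'highly available'."""
    return ha_system_status(ha_status_output) == "highly available"


if __name__ == "__main__":
    print(rolling_upgrade_allowed(SAMPLE_HA_STATUS))  # True
```

When the check returns False (for example, the status is "degraded"), a rolling upgrade cannot be run and only the local upgrade path applies.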

Rolling upgrade using the GUI:

Prepare the system for upgrade:

- Start the precheck on the active node. Do not continue with the upgrade if the precheck reports any errors.

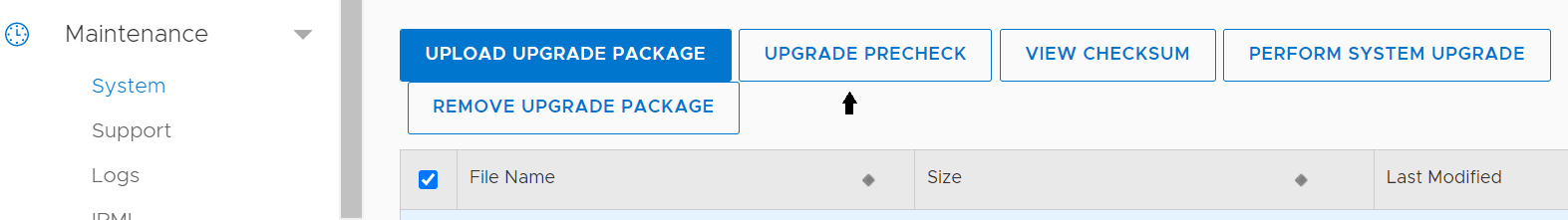

- Go to Maintenance > System.

- Check the box for the required RPM file.

- Click the "UPGRADE PRECHECK" button.

- If the precheck finishes without any issues, proceed with the rolling upgrade on the active node.

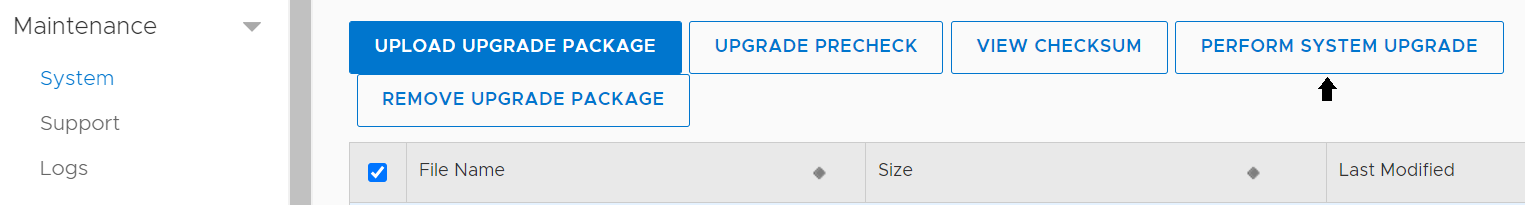

- Go to Maintenance > System.

- Check the box for the required RPM file.

- Click the "PERFORM SYSTEM UPGRADE" button.

- Wait for the rolling upgrade to finish. Do not start any HA failover operations before it finishes.

- After the rolling upgrade finishes, go to the IP address of the former standby node (Node1 in these examples) in a web browser and log in.

- Go to Health > Alerts and check for any unexpected alerts.

- At this point, the rolling upgrade has finished successfully.

Rolling upgrade using the command line:

- Run the precheck on the active node. Do not continue with the upgrade if the precheck reports any errors.

      Active-node # system upgrade precheck <rpm file>
      Upgrade precheck in progress:
      Node 0: phase 1/1 (Precheck 100%) , Node 1: phase 1/1 (Precheck 100%)
      Upgrade precheck found no issues.

- If the precheck finishes without any issues, start the rolling upgrade on the active node.

      Active-node # system upgrade start <rpm file>
      The 'system upgrade' command upgrades the Data Domain OS. File access is interrupted during the upgrade. The system reboots automatically after the upgrade.
      Are you sure? (yes|no) [no]: yes
      ok, proceeding.
      Upgrade in progress:
      Node   Severity   Issue                                               Solution
      ----   --------   ------------------------------                      --------
      0      WARNING    1 component precheck script(s) failed to complete
      0      INFO       Upgrade time est: 60 mins
      1      WARNING    1 component precheck script(s) failed to complete
      1      INFO       Upgrade time est: 80 mins
      ----   --------   ------------------------------                      --------
      Node 0: phase 2/4 (Install 0%) , Node 1: phase 1/4 (Precheck 100%)
      Upgrade phase status legend:
      DU : Data Upgrade
      FO : Failover
      ..
      PC : Peer Confirmation
      VA : Volume Assembly
      Node 0: phase 3/4 (Reboot 0%) , Node 1: phase 4/4 (Finalize 5%) FO
      Upgrade has started. System will reboot.

- After the standby node (node 1) reboots and becomes accessible, log back in to it to monitor the upgrade progress.

      Node1 # system upgrade status
      Current Upgrade Status: DD OS upgrade In Progress
      Node 0: phase 3/4 (Reboot 0%)
      Node 1: phase 4/4 (Finalize 100%) waiting for peer confirmation

- Wait for the rolling upgrade to finish. Until it does, do not start any HA failover operations.

      Node1 # system upgrade status
      Current Upgrade Status: DD OS upgrade Succeeded
      End time: 20xx.xx.xx:xx:xx

- Check the HA status. Confirm that both nodes are online and HA status is "highly available."

      Node1 # ha status detailed
      HA System name:                HA-system
      HA System Status:              highly available
      Interconnect Status:           ok
      Primary Heartbeat Status:      ok
      External LAN Heartbeat Status: ok
      Hardware compatibility check:  ok
      Software Version Check:        ok
      Node Node1:
          Role:        active
          HA State:    online
          Node Health: ok
      Node Node0:
          Role:        standby
          HA State:    online
          Node Health: ok
      Mirroring Status:
      Component Name   Status
      --------------   ------
      nvram            ok
      registry         ok
      sms              ok
      ddboost          ok
      cifs             ok
      --------------   ------

- Confirm that both nodes have the same DDOS version.

      Node1 # system show version
      Data Domain OS x.x.x.x-12345

      Node0 # system show version
      Data Domain OS x.x.x.x-12345

- Check for any unexpected alerts.

      Node1 # alert show current

      Node0 # alert show current

- At this point, the rolling upgrade has finished successfully.
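
The version comparison in the steps above can also be done on captured "system show version" output. The following Python sketch assumes the "Data Domain OS <version>" line format shown in this article; the version strings in the example are made-up placeholders and the function names are hypothetical.

```python
import re
from typing import Optional


def ddos_version(show_version_output: str) -> Optional[str]:
    """Return the version token that follows 'Data Domain OS', if present."""
    match = re.search(r"Data Domain OS\s+(\S+)", show_version_output)
    return match.group(1) if match else None


def versions_match(*outputs: str) -> bool:
    """True only when every output yields a version and all versions are equal."""
    versions = [ddos_version(output) for output in outputs]
    return None not in versions and len(set(versions)) == 1


if __name__ == "__main__":
    # Placeholder outputs, one per node; not real release numbers.
    node0 = "Data Domain OS 7.0.0.0-12345"
    node1 = "Data Domain OS 7.0.0.0-12345"
    print(versions_match(node0, node1))  # True
```

A False result means at least one node reports a different version or the expected line was not found, so the upgrade should not be treated as complete.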

Local upgrade:

- Check the HA status. A local upgrade can still work even if the status is "degraded."

      # ha status
      HA System name:    HA-system
      HA System status:  highly available <-
      Node Name   Node id   Role      HA State
      ---------   -------   -------   --------
      Node0       0         active    online
      Node1       1         standby   online
      ---------   -------   -------   --------

- If the HA status is "highly available," run the precheck on the active node. If it is degraded, run the precheck on both nodes.

      HA status is "highly available":

      Active-node # system upgrade precheck <rpm file>
      Upgrade precheck in progress:
      Node 0: phase 1/1 (Precheck 100%) , Node 1: phase 1/1 (Precheck 100%)
      Upgrade precheck found no issues.

      HA status is "degraded":

      Active-node # system upgrade precheck <rpm file> local
      Upgrade precheck in progress:
      Node 0: phase 1/1 (Precheck 100%)
      Upgrade precheck found no issues.

      Standby-node # system upgrade precheck <rpm file> local
      Upgrade precheck in progress:
      Node 1: phase 1/1 (Precheck 100%)
      Upgrade precheck found no issues.

- Take the standby node offline.

      Standby-node # ha offline
      This operation will cause the ha system to no longer be highly available.
      Do you want to proceed? (yes|no) [no]: yes
      Standby node is now offline.

- If this command fails or HA status is already degraded, continue with the local upgrade. Later steps may address these failures.

- Confirm that the standby node's status is offline.

      Standby-node # ha status
      HA System name:    HA-system
      HA System status:  degraded
      Node Name   Node id   Role      HA State
      ---------   -------   -------   --------
      Node0       0         active    degraded
      Node1       1         standby   offline
      ---------   -------   -------   --------

- Start the upgrade on the standby node. The node reboots during this process.

      Standby-node # system upgrade start <rpm file> local
      The 'system upgrade' command upgrades the Data Domain OS. File access is interrupted during the upgrade. The system reboots automatically after the upgrade.
      Are you sure? (yes|no) [no]: yes
      ok, proceeding.
      The 'local' flag is highly disruptive to HA systems and should be used only as a repair operation.
      Are you sure? (yes|no) [no]: yes
      ok, proceeding.
      Upgrade in progress:
      Node 1: phase 3/4 (Reboot 0%)
      Upgrade has started. System will reboot.

- The standby node reboots into the new version of DDOS, but stays offline.

- Check the system upgrade status. It can take 30 minutes or more to complete the upgrade.

      Standby-node # system upgrade status
      Current Upgrade Status: DD OS upgrade Succeeded
      End time: 20xx.xx.xx:xx:xx

- Check HA status. Confirm that the standby node (Node1 in the example below) is offline, and HA status is "degraded."

      Standby-node # ha status
      HA System name:    HA-system
      HA System status:  degraded
      Node Name   Node id   Role      HA State
      ---------   -------   -------   --------
      Node0       0         active    degraded
      Node1       1         standby   offline
      ---------   -------   -------   --------

- Start the local upgrade on the active node. The node reboots during this process.

      Active-node # system upgrade start <rpm file> local
      The 'system upgrade' command upgrades the Data Domain OS. File access is interrupted during the upgrade. The system reboots automatically after the upgrade.
      Are you sure? (yes|no) [no]: yes
      ok, proceeding.
      The 'local' flag is highly disruptive to HA systems and should be used only as a repair operation.
      Are you sure? (yes|no) [no]: yes
      ok, proceeding.
      Upgrade in progress:
      Node   Severity   Issue                                               Solution
      ----   --------   ------------------------------                      --------
      0      WARNING    1 component precheck script(s) failed to complete
      0      INFO       Upgrade time est: 60 mins
      ----   --------   ------------------------------                      --------
      Node 0: phase 3/4 (Reboot 0%)
      Upgrade has started. System will reboot.

- Check the system upgrade status. It can take 30 minutes or more to complete the upgrade.

      Active-node # system upgrade status
      Current Upgrade Status: DD OS upgrade Succeeded
      End time: 20xx.xx.xx:xx:xx

- After the active node upgrade finishes, the HA system status still shows "degraded." Bring the standby node back online; this reboots the standby node. Skip this step if "ha offline" was not run previously.

      Standby-node # ha online
      The operation will reboot this node.
      Do you want to proceed? (yes|no) [no]: yes
      Broadcast message from root (Wed Oct 14 22:38:53 2020):
      The system is going down for reboot NOW!
      **** Error communicating with management service.

- The standby node reboots and rejoins the cluster. Afterwards, HA status should become "highly available" again.

      Active-node # ha status detailed
      HA System name:                Ha-system
      HA System Status:              highly available
      Interconnect Status:           ok
      Primary Heartbeat Status:      ok
      External LAN Heartbeat Status: ok
      Hardware compatibility check:  ok
      Software Version Check:        ok
      Node node0:
          Role:        active
          HA State:    online
          Node Health: ok
      Node node1:
          Role:        standby
          HA State:    online
          Node Health: ok
      Mirroring Status:
      Component Name   Status
      --------------   ------
      nvram            ok
      registry         ok
      sms              ok
      ddboost          ok
      cifs             ok
      --------------   ------

- Confirm that both nodes have the same DDOS version.

      Node1 # system show version
      Data Domain OS x.x.x.x-12345

      Node0 # system show version
      Data Domain OS x.x.x.x-12345

- Check for any unexpected alerts.

      Node1 # alert show current

      Node0 # alert show current

- At this point, the upgrade has finished successfully.
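
The "standby is offline" checks in the local upgrade steps can be sketched as a small parser over the node table in "ha status" output. This Python example assumes the table layout shown in this article (node name, node id, role, HA state); the function names are hypothetical. As noted in the steps above, a local upgrade may still proceed when "ha offline" fails, so treat the result as informational.

```python
import re
from typing import Dict

# Sample text modeled on the degraded "ha status" output shown in this article.
SAMPLE_DEGRADED = """\
HA System name:    HA-system
HA System status:  degraded
Node Name   Node id   Role      HA State
---------   -------   -------   --------
Node0       0         active    degraded
Node1       1         standby   offline
---------   -------   -------   --------
"""

# One table row: <node name> <node id> <role> <HA state>
NODE_ROW = re.compile(
    r"^[ \t]*(\S+)[ \t]+(\d+)[ \t]+(active|standby)[ \t]+(\S+)[ \t]*$",
    re.MULTILINE,
)


def ha_state_by_role(ha_status_output: str) -> Dict[str, str]:
    """Map each role ('active', 'standby') to its reported HA state."""
    return {
        role: state
        for _name, _node_id, role, state in NODE_ROW.findall(ha_status_output)
    }


def standby_is_offline(ha_status_output: str) -> bool:
    """True when the standby node's HA state is 'offline'."""
    return ha_state_by_role(ha_status_output).get("standby") == "offline"


if __name__ == "__main__":
    print(ha_state_by_role(SAMPLE_DEGRADED))
    print(standby_is_offline(SAMPLE_DEGRADED))  # True
```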

Note: If there are any issues with the upgrade, contact your contracted support provider for further instructions and assistance.

Additional Information

Rolling upgrade:

- A single failover is performed during the upgrade, so the nodes swap roles.

- Upgrade information continues to be held in /ddr/var/log/debug/platform/infra.log, but there may be additional information in /ddr/var/log/debug/ha.log.

- Upgrade progress can be monitored with the command 'system upgrade watch'.
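
When upgrade progress is captured to a log or script, the per-node progress lines can be split into fields. The Python sketch below parses the "Node N: phase X/Y (Step P%)" format that appears in the sample output in this article; that 'system upgrade watch' prints exactly the same line format is an assumption, and the type and function names are hypothetical.

```python
import re
from typing import List, NamedTuple


class NodeProgress(NamedTuple):
    node: int
    phase: int
    total_phases: int
    step: str
    percent: int


# Matches fragments such as "Node 0: phase 3/4 (Reboot 0%)".
PROGRESS = re.compile(r"Node (\d+): phase (\d+)/(\d+) \((\w+) (\d+)%\)")


def parse_progress(line: str) -> List[NodeProgress]:
    """Return one NodeProgress entry per node found in a progress line."""
    return [
        NodeProgress(int(node), int(phase), int(total), step, int(percent))
        for node, phase, total, step, percent in PROGRESS.findall(line)
    ]


if __name__ == "__main__":
    sample = "Node 0: phase 3/4 (Reboot 0%) , Node 1: phase 4/4 (Finalize 5%) FO"
    for entry in parse_progress(sample):
        print(entry)
```

Lines that do not contain a progress fragment yield an empty list, so the parser can be run over mixed output without special handling.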

Local node upgrade:

- A local node upgrade does not perform an HA failover.

- As a result, there is an extended period of downtime while the active node upgrades, reboots, and performs post-reboot upgrade activities. This is likely to cause backups and restores to time out and fail. A maintenance window must be allocated for local upgrades.

- Local upgrades can proceed even if HA status is "degraded."

- If a rolling upgrade fails unexpectedly, a local upgrade can be used instead.

Affected Products

Data Domain, DD OS

Article Properties

Article Number: 000009653

Article Type: How To

Last Modified: 30 Mar 2026

Version: 11
