PowerEdge XE: How to Install Packages for DCGMI Troubleshooting on Red Hat Enterprise Linux and Rocky Linux

Summary: An outline of how to install DCGM (NVIDIA Data Center GPU Manager) on Linux so that DCGMI logs can be collected for troubleshooting on Red Hat Enterprise Linux and Rocky Linux. ...

Instructions

Prerequisites

To run DCGM, the target host must have the following NVIDIA components installed, listed in dependency order:

- Supported NVIDIA Data Center drivers

- On Hyperscale Graphics Extension (HGX) hosts, the Fabric Manager and NVSwitch Configuration and Query (NSCQ) packages

- DCGM Runtime and Software Development Kit (SDK)

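For Red Hat Enterprise Linux or Rocky Linux releases:

Determine the distribution name:

distribution=$(. /etc/os-release;echo $ID`rpm -E "%{?rhel}%{?fedora}"`)

Install the repository metadata and the CUDA GPG key:

[If needed, replace x86_64 in the repository URL with sbsa for arm64 hosts or with ppc64le for ppc64le hosts.]

sudo dnf config-manager \
    --add-repo http://developer.download.nvidia.com/compute/cuda/repos/$distribution/x86_64/cuda-rhel8.repo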
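The repository URL used by the `dnf config-manager --add-repo` command depends on the distribution string and the CPU architecture. The following is a minimal Bash sketch that builds that URL. The helper names `nvidia_repo_arch` and `nvidia_repo_url` are hypothetical. The .repo file name pattern `cuda-rhel<major>.repo` is an assumption that generalizes the article's `cuda-rhel8.repo` and is not stated in the source.

```shell
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical, and the .repo file name is
# ASSUMED to follow the pattern cuda-rhel<major>.repo.

# Map `uname -m` output to the repository architecture directory:
# x86_64 -> x86_64, aarch64/arm64 -> sbsa, ppc64le -> ppc64le.
nvidia_repo_arch() {
  case "$1" in
    x86_64)        echo "x86_64" ;;
    aarch64|arm64) echo "sbsa" ;;
    ppc64le)       echo "ppc64le" ;;
    *)             echo "unsupported architecture: $1" >&2; return 1 ;;
  esac
}

# Print the repository URL for a distribution string (e.g. "rhel8"),
# a RHEL major version (e.g. "8"), and a `uname -m` architecture.
nvidia_repo_url() {
  local distribution=$1 rhel_major=$2 machine=$3 arch
  # Stop instead of guessing when the architecture is not recognized.
  arch=$(nvidia_repo_arch "$machine") || return 1
  echo "http://developer.download.nvidia.com/compute/cuda/repos/${distribution}/${arch}/cuda-rhel${rhel_major}.repo"
}
```

On a host, you might call it after setting `distribution`:

`sudo dnf config-manager --add-repo "$(nvidia_repo_url "$distribution" "$(rpm -E %rhel)" "$(uname -m)")"`

The helper fails on an unknown architecture instead of falling back to x86_64. This avoids silently adding a repository for the wrong platform.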

Update the repository metadata.

sudo dnf clean expire-cache

Now, install DCGM.

sudo dnf install -y datacenter-gpu-manager

On HGX hosts (A100/A800 and H100/H800), you must install the NVIDIA Switch Configuration and Query (NSCQ) library if you want to poll the NVSwitches. DCGM needs NSCQ to enumerate the NVSwitches and provide switch telemetry. NSCQ must match the driver version branch (XXX) installed on the host, so substitute XXX with that driver branch in the command below:

sudo dnf module install nvidia-driver:XXX/fm

To find the installed driver version, run:

nvidia-smi

In this example, nvidia-smi reports a driver from the 550 branch, so the command is:

sudo dnf module install nvidia-driver:550/fm
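Because the module stream must match the installed driver branch, the lookup can be scripted. The following is a minimal Bash sketch. It assumes the branch is the leading number of the driver version string (as in the article's example, where a 550 driver maps to `nvidia-driver:550/fm`). The helper names `driver_branch` and `fm_module_spec` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical. ASSUMPTION: the driver branch
# is the part of the version string before the first dot (550.54.15 -> 550).

# Print the driver branch for a full driver version string.
driver_branch() {
  local version=$1
  [ -n "$version" ] || { echo "empty driver version" >&2; return 1; }
  echo "${version%%.*}"
}

# Print the dnf module spec (stream and fm profile) for a driver version.
fm_module_spec() {
  local branch
  branch=$(driver_branch "$1") || return 1
  echo "nvidia-driver:${branch}/fm"
}
```

For example, first read the driver version:

`version=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)`

Then install the matching module:

`sudo dnf module install "$(fm_module_spec "$version")"`

Taking only the first line assumes that every GPU in the host reports the same driver version, which is expected when a single driver is installed.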

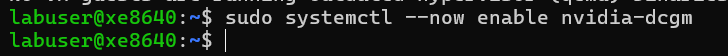

Enable the DCGM systemd service so that it starts at boot, and start it now:

sudo systemctl --now enable nvidia-dcgm

To verify the installation, use dcgmi to query the host. The output lists all supported GPUs (and any NVSwitches) found in the host. Note that the option is a lowercase L:

dcgmi discovery -l

[The example output does not include NVSwitches. When NVSwitches are present and detected, this field is populated with their details.]

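If sudo dnf install -y datacenter-gpu-manager reports that it cannot find the package, the NVIDIA repository is most likely not configured. Set distribution as described earlier, add the repository, and then rerun the installation:

sudo dnf config-manager \
    --add-repo http://developer.download.nvidia.com/compute/cuda/repos/$distribution/x86_64/cuda-rhel8.repo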