OpenShift: Manual prechecks are needed before updating the OCP ingress certificate.

Summary: This KB describes potential causes of OCP node reboot failures while updating the OCP ingress certificate and provides suggested prechecks to mitigate them. ...

Symptoms

When the OCP ingress certificate is updated and a new root CA certificate is introduced to the OCP cluster, all OCP nodes are rebooted one after another (rolling reboot) as part of the certificate update process.

Some manual prechecks are needed before updating the OCP ingress certificate to reduce the risk of failures during the node reboots.

Cause

- If pods or VMs on the rebooting node cannot be evicted or migrated, the reboot is blocked and the certificate update operation fails.

- If the number of pods on each node is close to the maximum allowed number of pods, the OCP cluster may become inaccessible during the rolling node reboot.

Resolution

- How to check whether the root CA is going to change.

# retrieve the certificates of the current OCP cluster ingress

openssl s_client -showcerts -connect console-openshift-console.apps.<cluster_name.cluster_domain>:443 2>&1 </dev/null |sed --quiet '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p'

# the last certificate in the above command's output should be the root CA certificate

# save the last certificate as a file named "existing_root_ca.pem" by copying the content between "-----BEGIN CERTIFICATE-----" and "-----END CERTIFICATE-----" (both the BEGIN and END lines must be included)

# retrieve the root CA certificate of the new certificate and save it as "new_root_ca.pem"

# convert both pem files to der format

openssl x509 -outform der -in new_root_ca.pem -out new_root_ca.der

openssl x509 -outform der -in existing_root_ca.pem -out existing_root_ca.der

# compare the shasum (or another checksum) of the two files to find out whether the two root CA certificates are identical

shasum existing_root_ca.der new_root_ca.der
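The manual steps above can also be sketched as a few shell functions. This is only an illustration under stated assumptions: the function names `last_cert`, `cert_fingerprint`, and `same_root_ca` are hypothetical helpers, not part of `openssl` or `oc`, and the sketch assumes, like the steps above, that the last certificate in the chain is the root CA. It compares SHA-256 fingerprints of the PEM files directly instead of converting them to DER first.

```shell
# Print the last PEM certificate block found on stdin.
# Assumption: the last certificate in the chain is the root CA.
last_cert() {
  awk '
    /-----BEGIN CERTIFICATE-----/ { cert = ""; in_cert = 1 }
    in_cert                       { cert = cert $0 "\n" }
    /-----END CERTIFICATE-----/   { in_cert = 0; last = cert }
    END                           { printf "%s", last }
  '
}

# Print the SHA-256 fingerprint of the certificate in a PEM file.
cert_fingerprint() {
  openssl x509 -in "$1" -noout -fingerprint -sha256
}

# Return 0 if both PEM files contain the same certificate,
# 1 if they differ, and 2 if a file could not be parsed.
same_root_ca() {
  fp_a=$(cert_fingerprint "$1") || return 2
  fp_b=$(cert_fingerprint "$2") || return 2
  [ "$fp_a" = "$fp_b" ]
}

# Example usage (not executed here):
#   openssl s_client -showcerts -connect <host>:443 2>&1 </dev/null | last_cert > existing_root_ca.pem
#   same_root_ca existing_root_ca.pem new_root_ca.pem && echo "root CA unchanged"
```

It is still worth inspecting the saved file (for example with `openssl x509 -in existing_root_ca.pem -noout -subject -issuer`) to confirm it really is the root CA, since a server may not send the full chain.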

If the two root CA certificates are the same, the nodes will not reboot during the certificate update. You can skip the steps below and exit this KB now.

If the two root CA certificates are different, perform the prechecks below and take the suggested actions if any issue is identified. Before taking the steps below, have the Red Hat OpenShift "oc" command line tool ready, together with the username and password of a user with the cluster-admin role, to log in to the OCP cluster.

Precheck and suggested actions:

- Check for known cases that prevent node reboot:

- VM(s) in the OCP cluster do not meet the migration conditions: https://docs.openshift.com/container-platform/4.13/virt/live_migration/virt-live-migration.html

- pod(s) in the OCP cluster do not meet the migration or eviction conditions, including but not limited to:

- pod in an unready or unhealthy status

- pod competing for resources, such as PVCs or hardware resources

- pod with an incorrect disruption budget configuration

- a poorly designed or implemented pod/VM can also block the reboot.

If a pod or VM migration issue is identified, try to remove or reconfigure the affected pod(s)/VM(s) to unblock the reboot.
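As a starting point for the checks above, the following sketch lists two common blockers: pod disruption budgets that currently allow no disruptions, and pods that are neither running nor completed. The function names `blocking_pdbs` and `unhealthy_pods` are hypothetical helpers introduced here for illustration, and the sketch does not cover VM live-migration conditions.

```shell
# List PodDisruptionBudgets that currently allow zero disruptions.
# Output columns (tab separated): namespace, name, disruptionsAllowed.
blocking_pdbs() {
  oc get pdb -A -o jsonpath='{range .items[*]}{.metadata.namespace}{"\t"}{.metadata.name}{"\t"}{.status.disruptionsAllowed}{"\n"}{end}' |
    awk -F'\t' '$3 == 0'
}

# List pods whose phase is neither Running nor Succeeded
# (for example Pending, Failed, or Unknown).
unhealthy_pods() {
  oc get pods -A --no-headers \
    --field-selector=status.phase!=Running,status.phase!=Succeeded
}

# Example usage (not executed here):
#   blocking_pdbs
#   unhealthy_pods
```

A pod in the Running phase can still be unready (for example, a crash-looping container), so the second list is not exhaustive; the READY column of `oc get pods -A` remains worth a manual look.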

- Dry-run the eviction on all OCP nodes. Run the command below for each node to check whether anything blocks the reboot; it can also reveal potential reboot prevention risks:

oc adm drain <each-ocp-node-fqdn-or-ip> --ignore-daemonsets --delete-emptydir-data --force --dry-run=true

The dry run is expected to pass without errors and print a message like "node/<node_ip_or_fqdn> drained (dry run)". If any errors are reported, fix them before proceeding with the certificate update.
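To avoid running the dry run by hand for every node, the loop can be sketched as below. The function name `dry_run_drain_all` is a hypothetical helper. The sketch passes `--dry-run=client`, the client-side dry-run mode; this is an assumption about the `oc` version in use, and older releases may only accept the boolean form shown above.

```shell
# Dry-run a drain on every node in the cluster.
# Returns 0 if all dry runs pass, 1 if at least one node reports an error.
dry_run_drain_all() {
  failed=0
  for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do
    if ! oc adm drain "$node" --ignore-daemonsets --delete-emptydir-data \
         --force --dry-run=client; then
      echo "dry-run drain failed for node: $node" >&2
      failed=1
    fi
  done
  return "$failed"
}

# Example usage (not executed here):
#   dry_run_drain_all || echo "fix the reported errors before updating the certificate"
```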

- Check the OCP cluster workload.

# get the maximum allowed number of pods for each node

oc get node <each-ocp-node-fqdn-or-ip> -ojsonpath='{.status.capacity.pods}'

# get the number of pods currently running in the OCP cluster

oc get pods --no-headers -A|wc -l

# the maximum allowed number of pods in the OCP cluster equals the sum of the maximum allowed number of pods of all nodes.

# it is suggested that the number of pods currently running in the OCP cluster is less than 80% of the maximum allowed number of pods in the OCP cluster.
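The arithmetic behind the 80% guideline can be sketched as follows. The function names `cluster_pod_capacity`, `running_pod_count`, and `check_pod_usage` are hypothetical helpers. Unlike the plain `wc -l` count above, `running_pod_count` counts only pods in the Running phase, which is an assumption about what "currently running" should mean here.

```shell
# Sum .status.capacity.pods over all nodes in the cluster.
cluster_pod_capacity() {
  oc get nodes -o jsonpath='{range .items[*]}{.status.capacity.pods}{"\n"}{end}' |
    awk '{ total += $1 } END { print total + 0 }'
}

# Count pods in the Running phase across all namespaces.
running_pod_count() {
  oc get pods -A --no-headers --field-selector=status.phase=Running 2>/dev/null |
    wc -l | tr -d ' '
}

# Print the usage as an integer percentage (rounded down).
# Returns 0 below 80%, 1 at or above 80%, 2 on invalid capacity.
check_pod_usage() {
  running=$1
  capacity=$2
  [ "$capacity" -gt 0 ] || { echo "capacity must be positive" >&2; return 2; }
  percent=$(( running * 100 / capacity ))
  echo "${percent}%"
  [ "$percent" -lt 80 ]
}

# Example usage (not executed here):
#   check_pod_usage "$(running_pod_count)" "$(cluster_pod_capacity)"
```

For example, 450 running pods on a cluster with three nodes of capacity 250 each gives 450 * 100 / 750 = 60%, which is within the guideline.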

If a high workload is identified (the number of pods currently running in the OCP cluster exceeds 80% of the maximum allowed number of pods in the OCP cluster), try to reduce the workload before updating the certificate by:

- undeploying workloads

- manually rescheduling workloads to other nodes, if the pods are not required to run on specific nodes

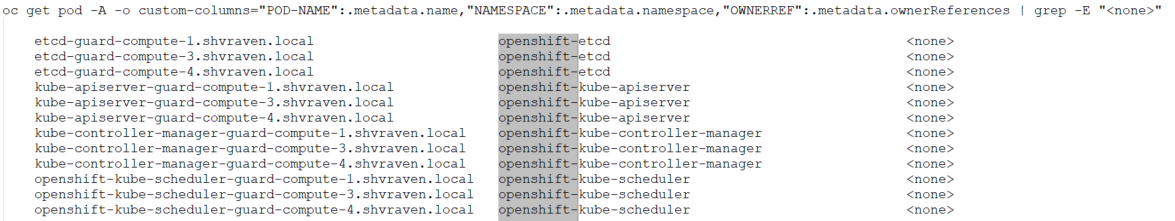

- Find potential orphan pods with the command below.

oc get pod -A -o custom-columns="POD-NAME":.metadata.name,"NAMESPACE":.metadata.namespace,"OWNERREF":.metadata.ownerReferences | grep -E "<none>"

Orphan pods will be removed during the reboot.

It is fine if the command output shows pods whose namespace (second column) has the "openshift-" prefix; they are redeployed automatically after the reboot.

If the command output shows pods whose namespace does not have the "openshift-" prefix, make a note of these orphan pods and redeploy them after the reboot if needed.
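The filtering described above can be sketched so that only the orphan pods needing attention are printed. The function name `non_openshift_orphans` is a hypothetical helper, and the sketch puts the namespace in the first column (unlike the command above) to keep the `awk` filter simple.

```shell
# Print "namespace/pod" for every pod that has no owner reference and whose
# namespace does not start with "openshift-".
non_openshift_orphans() {
  oc get pod -A --no-headers \
    -o custom-columns=NAMESPACE:.metadata.namespace,NAME:.metadata.name,OWNERREF:.metadata.ownerReferences |
    awk '$3 == "<none>" && $1 !~ /^openshift-/ { print $1 "/" $2 }'
}

# Example usage (not executed here): keep a record for redeployment after the reboot.
#   non_openshift_orphans > orphan_pods.txt
```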