How Dell’s AI Data Platform Keeps Your GPUs Fed

Key Takeaways: Storage-embedded AI stacks can increase data movement and integration overhead, leaving GPUs underutilized. Dell AI Data Platform takes an approach that matches the reality most of our customers face: an open, federated AI data foundation that operates on data where it lives, accelerates pipelines with GPUs and keeps your GPU investments working instead of waiting on data.

You’re investing heavily in GPUs. But as AI moves from pilots to production, the real bottleneck isn’t the GPU itself—it’s the data layer that feeds it.

Your unstructured and semi-structured data is scattered across file systems, object stores, data warehouses, SaaS platforms, public clouds and hosted services. If your AI data platform can’t efficiently find, prepare and serve that data to GPUs, you risk overpaying for accelerators that sit idle while pipelines struggle to keep up.

New independent research¹ examines how different AI data platform designs shape real-world outcomes over time. The report compares Dell AI Data Platform to VAST AI OS across architecture, data movement, GPU utilization and RAG/search workflows.

VAST positions itself as a unified, storage-embedded AI stack: centralize data into a VAST DataSpace, tightly couple AI services with that storage and streamline operations through an integrated platform approach. On paper, that sounds compelling. In practice, the story changes.

For complex, hybrid environments, the findings show that a closed, storage-embedded stack can introduce new operational and data-movement constraints—while a federated, GPU-optimized data foundation from Dell helps keep your GPUs busier and deployment options more flexible.

Two paths for the AI data layer

The study highlights two fundamentally different ways to design your AI data layer:

- VAST Data AI OS: A storage-embedded AI stack that centralizes data into a vendor-controlled DataSpace and runs AI services on top of that storage.

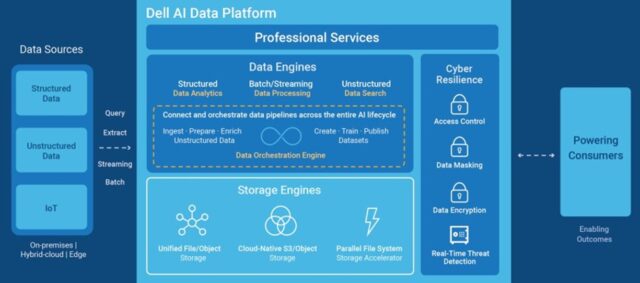

- Dell AI Data Platform: An open, modular AI data foundation built on Dell PowerScale and Dell ObjectScale storage and Dell compute, with a federated control plane for accessing and processing data wherever it already lives.

VAST positions its model as a way to simplify AI operations by consolidating data into a single, unified namespace. In practice, that means:

- Copying external datasets into VAST DataSpace.

- Using tools like VAST SyncEngine to move and synchronize data from your existing systems.

- Maintaining ongoing sync jobs as source data changes.

This can work well for greenfield AI deployments where most new data is born in VAST. But while your AI projects may be greenfield, your data estate isn’t. As it grows, more critical data and operational risk accumulate inside a tightly coupled, storage-embedded stack.

Dell AI Data Platform takes a different approach. Instead of funneling data into a new silo, it federates access to your existing databases, data lakes, object stores and file systems. You connect to the data where it is, preserve your current governance and analytics tools and use the platform to orchestrate GPU-accelerated data preparation and retrieval across that landscape.

Operate your data where it lives, not where you’re forced to move it

One of the most overlooked cost drivers in enterprise AI is data preparation and enrichment. How quickly you can get the right data in the right shape often decides whether GPUs run at high utilization or sit idle.
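
The link between data delivery and GPU utilization can be made concrete with a back-of-the-envelope model. The sketch below is a simplification, not a figure from the report: it assumes utilization is capped by the ratio of the rate at which the data layer delivers prepared data to the rate at which the GPUs can consume it. The function names, rates and hourly price are hypothetical.

```python
def gpu_utilization(data_rate_gbps: float, gpu_consume_rate_gbps: float) -> float:
    """Estimate the fraction of time GPUs are busy when input-bound.

    Simplifying assumption: GPUs can only work as fast as prepared data
    arrives, so utilization is capped at delivery rate / consumption rate.
    """
    if data_rate_gbps < 0 or gpu_consume_rate_gbps <= 0:
        raise ValueError("rates must be non-negative, consumption rate positive")
    return min(1.0, data_rate_gbps / gpu_consume_rate_gbps)


def idle_cost_per_hour(utilization: float, gpu_count: int,
                       hourly_cost_per_gpu: float) -> float:
    """Cost of GPU capacity that is paid for but spent waiting on data."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return (1.0 - utilization) * gpu_count * hourly_cost_per_gpu


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    util = gpu_utilization(data_rate_gbps=4.0, gpu_consume_rate_gbps=10.0)
    cost = idle_cost_per_hour(util, gpu_count=8, hourly_cost_per_gpu=3.0)
    print(f"utilization: {util:.0%}, idle cost per hour: ${cost:.2f}")
```

Real pipelines overlap I/O and compute and rarely behave this linearly, so treat the model as a way to frame the question rather than a sizing tool.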

Dell: Federated Access, Less Plumbing

Dell AI Data Platform blends its data engines and management tools with an architecture designed to work across the data sources you already use. Instead of gathering everything into a vendor-controlled repository, you and your team can:

- Prepare and reuse data in place, across databases, data lakes and object stores.

- Preserve existing governance and security practices.

- Scale storage and processing independently as needs evolve.

This federated model reduces time spent relocating data and cuts down the sync jobs and one-off pipelines that can bog your team and budget down.

VAST: Copy, Sync, and Maintain

VAST AI OS works best when data is already inside a VAST environment. When external data is required, organizations often rely on tools like VAST SyncEngine to move and synchronize datasets into a VAST DataSpace, then keep those copies fresh as sources change.

That means:

- More migration steps during initial onboarding.

- More sync jobs and copies to manage over time.

- Greater risk of drift between source systems and what models actually see.
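
To illustrate what drift between a source system and a synchronized copy looks like, here is a minimal sketch that compares content fingerprints on both sides. It is a generic technique and does not describe how VAST SyncEngine or any Dell component works. The dictionaries stand in for real storage listings, and every name is hypothetical.

```python
from __future__ import annotations

import hashlib


def fingerprint(records: dict[str, bytes]) -> dict[str, str]:
    """Map each object key to a SHA-256 digest of its content."""
    return {key: hashlib.sha256(value).hexdigest() for key, value in records.items()}


def find_drift(source: dict[str, bytes], copy: dict[str, bytes]) -> dict[str, list[str]]:
    """Report objects that differ between a source system and its copy."""
    src, cp = fingerprint(source), fingerprint(copy)
    shared = src.keys() & cp.keys()
    return {
        # Present in the source but never synced.
        "missing_in_copy": sorted(src.keys() - cp.keys()),
        # Synced once, but the source has changed since.
        "stale_in_copy": sorted(k for k in shared if src[k] != cp[k]),
        # Deleted at the source but still served from the copy.
        "orphaned_in_copy": sorted(cp.keys() - src.keys()),
    }


if __name__ == "__main__":
    # Hypothetical in-memory stand-ins for a source system and its copy.
    source = {"a.pdf": b"v1", "b.pdf": b"v2", "c.pdf": b"v1"}
    copy = {"a.pdf": b"v1", "b.pdf": b"v1", "d.pdf": b"v1"}
    print(find_drift(source, copy))
```

Every category in that report is a way for a model to see data that no longer matches the system of record, which is the risk the last bullet describes.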

In practice, the research finds that Dell’s federated approach helps shorten onboarding cycles, reduce ongoing integration overhead and keep GPUs busier by reducing delays caused by data movement.

Feed GPUs efficiently with accelerated, optimized pipelines

Scaling AI is not just about getting data to GPUs—it is about doing so efficiently, without saddling them with avoidable overhead.

Dell: Accelerated Data Processing and Analytics

Dell AI Data Platform integrates Apache Spark with NVIDIA RAPIDS to let data processing and AI workloads take advantage of GPU acceleration throughout the data pipeline. Its analytics engine, powered by Starburst, uses advanced file readers, autonomous indexing and intelligent reuse of common table expressions to eliminate redundant scans.

In benchmarked scenarios² reported by Dell and NVIDIA, this platform delivers:

- Up to 12x faster vector indexing.

- Up to 3x faster data processing.

- A 19x reduction in time-to-first-token vs traditional CPU-based workflows.

The independent report incorporates these benchmark results as evidence that Dell AI Data Platform can accelerate end-to-end AI pipelines, sustain higher GPU utilization and reduce cost per workload by optimizing the data path, not just the GPUs themselves.
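
For readers who want a sense of what Spark with RAPIDS involves in practice, the sketch below assembles the settings that the open-source RAPIDS Accelerator for Apache Spark typically needs. The configuration keys are from the public Spark and RAPIDS Accelerator documentation. The helper functions and values are hypothetical, and nothing here is taken from Dell's own configuration, which the source does not describe.

```python
from __future__ import annotations


def rapids_spark_conf(gpus_per_executor: int = 1, tasks_per_gpu: int = 4) -> dict[str, str]:
    """Build Spark settings that enable the RAPIDS Accelerator plugin.

    Assumes the RAPIDS Accelerator jar is already on the Spark classpath.
    """
    if gpus_per_executor < 1 or tasks_per_gpu < 1:
        raise ValueError("gpus_per_executor and tasks_per_gpu must be >= 1")
    return {
        # Load the accelerator so supported SQL/DataFrame operators run on GPU.
        "spark.plugins": "com.nvidia.spark.SQLPlugin",
        "spark.rapids.sql.enabled": "true",
        # Tell the Spark scheduler how many GPUs each executor owns.
        "spark.executor.resource.gpu.amount": str(gpus_per_executor),
        # A fractional amount lets several tasks share one GPU.
        "spark.task.resource.gpu.amount": str(1 / tasks_per_gpu),
    }


def to_submit_args(conf: dict[str, str]) -> list[str]:
    """Render the settings as spark-submit command-line arguments."""
    args: list[str] = []
    for key, value in sorted(conf.items()):
        args += ["--conf", f"{key}={value}"]
    return args


if __name__ == "__main__":
    print(" ".join(["spark-submit"] + to_submit_args(rapids_spark_conf())))
```

Existing Spark SQL and DataFrame code generally runs unchanged once the plugin is enabled. Operators the plugin does not support fall back to the CPU.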

VAST: More Work on the GPU Path

Even as VAST’s hardware foundation evolves, the research notes that its AI OS data processing stack still depends on software workflows that lack optimizations aimed at eliminating redundant transformations, excess memory movement and costly shuffle patterns.

Those inefficiencies can:

- Burden GPU pipelines.

- Reduce overall utilization.

- Increase the engineering effort required to tune and maintain performance.

The net result: a greater risk that your GPUs spend time waiting on inefficient data prep, rather than continuously processing useful work.

RAG and search without a single-stack bottleneck

Similar trade-offs show up in RAG and search, where workloads are particularly sensitive to indexing speed, connector sprawl and pipeline fragility.

Dell: Data Search Engine for AI

Dell AI Data Platform includes a Data Search Engine powered by Elastic, designed specifically for AI use cases. It provides:

- Vector search and GPU-accelerated index creation.

- Connectors for common enterprise content sources.

- Hybrid keyword and vector retrieval in the same environment.

By combining GPU-accelerated search with built-in connectors, Dell’s platform helps teams deploy production-grade AI assistants and RAG workflows with fewer moving parts in the data path.
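
Hybrid retrieval means merging a keyword ranking and a vector ranking into one result list. One widely used way to do that is reciprocal rank fusion (RRF), sketched below. The source does not say which fusion method the Data Search Engine uses, so treat this as an illustration of the general idea. The document IDs are hypothetical, and `k = 60` is a commonly used default, not a tuned value.

```python
from __future__ import annotations


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) for every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken by document ID for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    # Hypothetical results from a keyword query and a vector query.
    keyword_hits = ["doc_a", "doc_b", "doc_c"]
    vector_hits = ["doc_b", "doc_d", "doc_a"]
    print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
```

Because RRF uses only rank positions, it needs no score normalization between the keyword and vector retrievers, which is one reason it is a popular starting point for hybrid search.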

VAST: RAG on Data Inside the Stack

VAST AI OS supports search and retrieval through native indexing and vector embedding integrated within its data platform, operating on data it stores and manages. That unified namespace can be powerful, but only for data that has been pulled into the stack. Extending RAG to new or external sources typically requires additional integration and migration work, which can slow iteration and increase operational risk.

Choose a Data Platform That Keeps GPUs Working, Not Waiting

The report underscores a simple conclusion: where you place your AI data platform matters as much as which GPUs you buy.

A storage-embedded stack like VAST Data AI OS can look attractive for consolidating data and AI services, but it also concentrates integration work and dependency into a single environment. Over time, you take on more responsibility for copy management, sync jobs and RAG extensions—and you can still end up with GPUs that spend too much time waiting on data.

And because VAST must pair with separate server vendors, you’re effectively stitching together a multi-vendor stack. That adds another layer of operational complexity that Dell’s integrated approach avoids.

Dell AI Data Platform is designed around a different principle: keep data where it is and bring GPU-accelerated processing to it.

You get an open, federated AI data foundation that:

- Operates on data where it lives, reducing migrations and sync overhead.

- Accelerates pipelines with GPUs to keep your accelerators productive.

- Extends RAG and search across your existing, governed data sources.

Don’t starve your GPUs with a closed, storage-bound stack. Decide for yourself whether your AI data platform is truly built to keep your GPUs fed.

To see how the approaches compare in detail, read the full third-party report and work with your Dell team for a TCO and GPU-utilization analysis based on your own projected workloads and data sources.

¹ Based on an April 2026 Prowess Consulting report, commissioned by Dell and drawing on publicly available sources, comparing architectural design, data-access patterns, RAG search capabilities and operational factors between Dell AI Data Platform and VAST AI OS. Full report: here.

² Dell Technologies. “Dell AI Data Platform with NVIDIA Supercharges Enterprise AI with Breakthrough Data Orchestration and Storage Innovations.” PR Newswire. March 2026.